As I’ve written about previously, for better or worse, Google Photos is the initial destination of all the family photos and videos we take as well as the source of truth for the albums I sort them into. I’ve tried every consumer photo organization tool/website/app on the market, and nothing comes as close to hitting my feature requirements as Google Photos. (Well, short of building my own solution, but that’s…uh…not yet.)

Photos is the only Google product I use. (Besides search – sigh.) I gave up Gmail years ago because even with full backups of all my messages, my email address itself is the key to nearly every other online account. The chance of getting locked out due to an automated flag is too high – even if I am a paying customer. But if I lost access to my photo library stored with Google? That would be bad, but not the end of the world since I have all that data backed up.

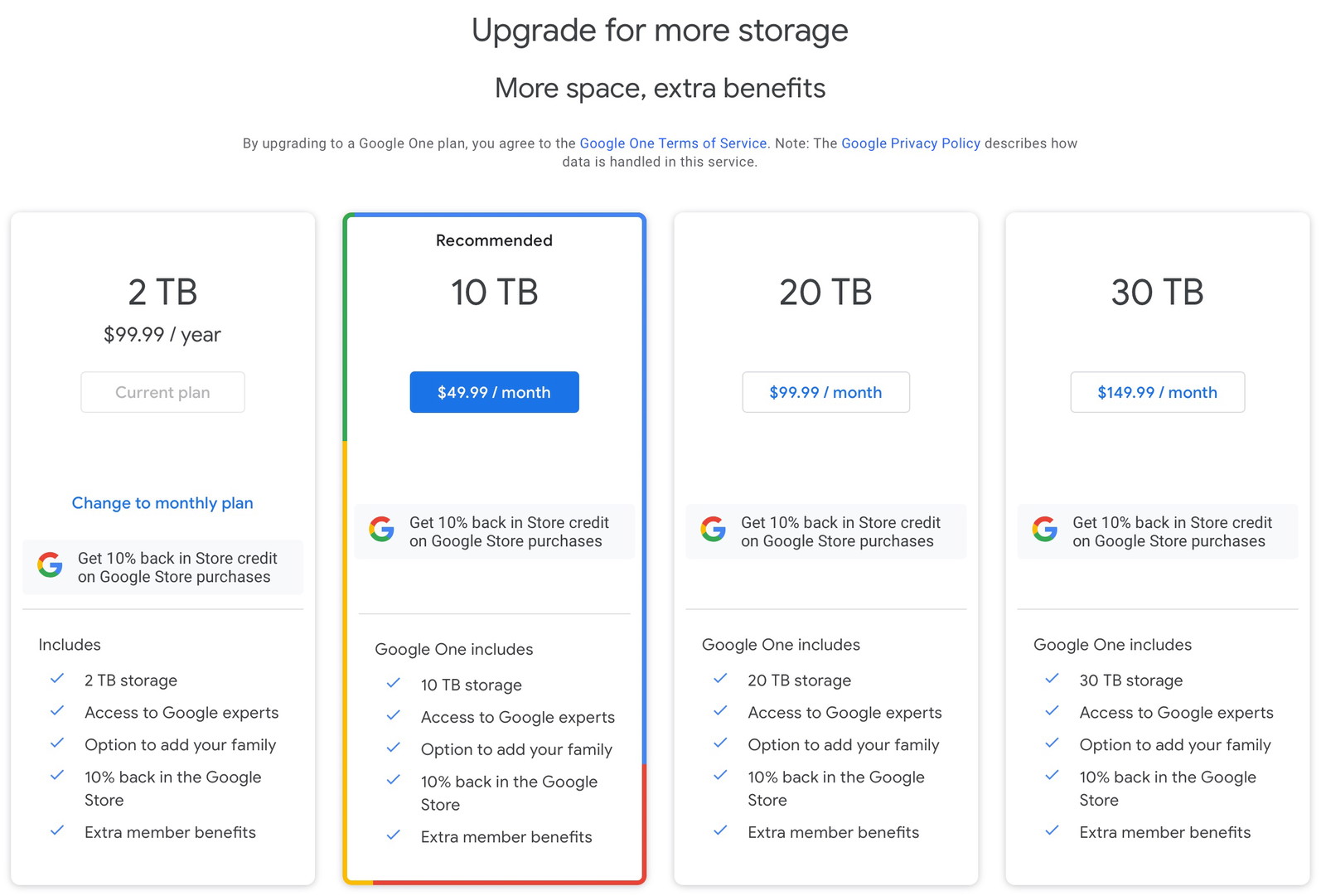

However, I’m always playing the long game and thinking about contingency plans with data this important. Chief among them is my looming monthly price increase apocalypse. I’m currently paying Google $99/year for 2TB of storage space. When I hit that limit, the next tier is 10TB for $600/year. That’s a hell of a jump for that next byte. And while I totally get the business reasons behind that pricing, sheesh.

So I’ve been thinking about my eventual exit strategy. The obvious next and most comparable choice is Amazon Photos. (I’m also keeping a close eye on PhotoPrism.) Amazon solves the storage pricing problem because they’re Amazon and just charge you for an extra 1TB at a time as your needs increase.

I’m perfectly willing to pay for what I use, so that’s great. And Amazon’s website and apps are actually better than Google’s in many ways. But they do fall on their face as soon as you start dealing with videos larger than 2GB (easy to do with kids and a modern iPhone shooting 4K video) or over 20 minutes long.

So, I’m keeping a very close watch on Amazon and hoping they improve enough to be the right solution in the future.

Anyway, the point of this blog post is to say that I’m preparing for an eventual move to another photo cloud service. I’m also trying to keep my local backups neatly organized. So, I wrote a small command-line tool to specifically deal with the Google Photos backup format that you’ll receive if you request a dump of your data.

It takes Google’s directory structure and all its duplicated files, then merges, sorts, and deduplicates your photos and videos into a sane folder structure – the one I’ve been using for over a decade.

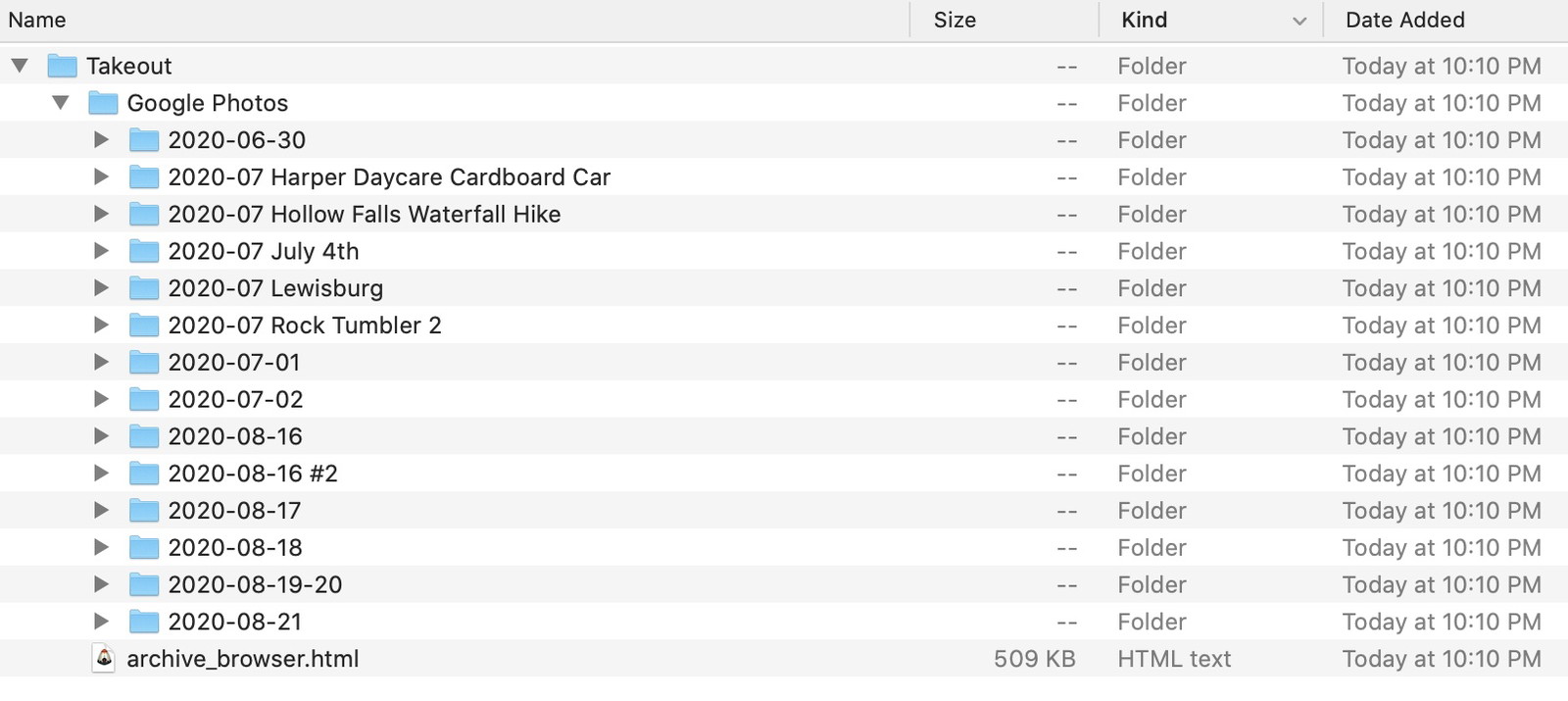

You can request a dump of all of your Photos or just specific albums/dates using Google Takeout. (Kudos to Google for making this sort of stuff so easy.) It works great, and you’ll get everything. The problem is the backups are structured as if you’ll only ever do a single backup in your life – as opposed to incremental ones. And they also don’t deduplicate your data before sending it to you. (I understand why.)

Once your backup is ready, you’ll get everything split into 50GB .tgz files. Each one will extract into the following directory structure:

You’ll get a folder for every item’s capture date. If you took 50 photos on August 15, 2020, you’d get a folder named “2020-08-15”. Except, for reasons I don’t understand, you’ll occasionally get a folder named “2020-08-15 #2”, too. Same day, another folder.
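To make that naming concrete, here is a minimal Python sketch (not the tool's actual Swift code) that recognizes both forms of per-day folder and maps them to a month. The `YYYY-MM` bucket name and the `month_bucket` helper are assumptions for illustration only.

```python
import re
from typing import Optional

# Matches "2020-08-15" as well as the occasional "2020-08-15 #2".
DATE_FOLDER = re.compile(r"(\d{4})-(\d{2})-(\d{2})(?: #\d+)?")


def month_bucket(folder_name: str) -> Optional[str]:
    """Return "YYYY-MM" for a per-day folder name, or None for anything else."""
    match = DATE_FOLDER.fullmatch(folder_name)
    if match is None:
        return None  # Not a date folder, so presumably an album.
    year, month, _day = match.groups()
    return f"{year}-{month}"
```

Both `month_bucket("2020-08-15")` and `month_bucket("2020-08-15 #2")` land in the same month, which is what lets the "#2" folders collapse away.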

Google will also create folders for every album included in your backup. Perfect. But any items in those albums (folders) will be duplicated in their respective date folders, too. So, if you have a 2GB video included in two albums, it’ll be in three backup folders, which means 6GB of space.

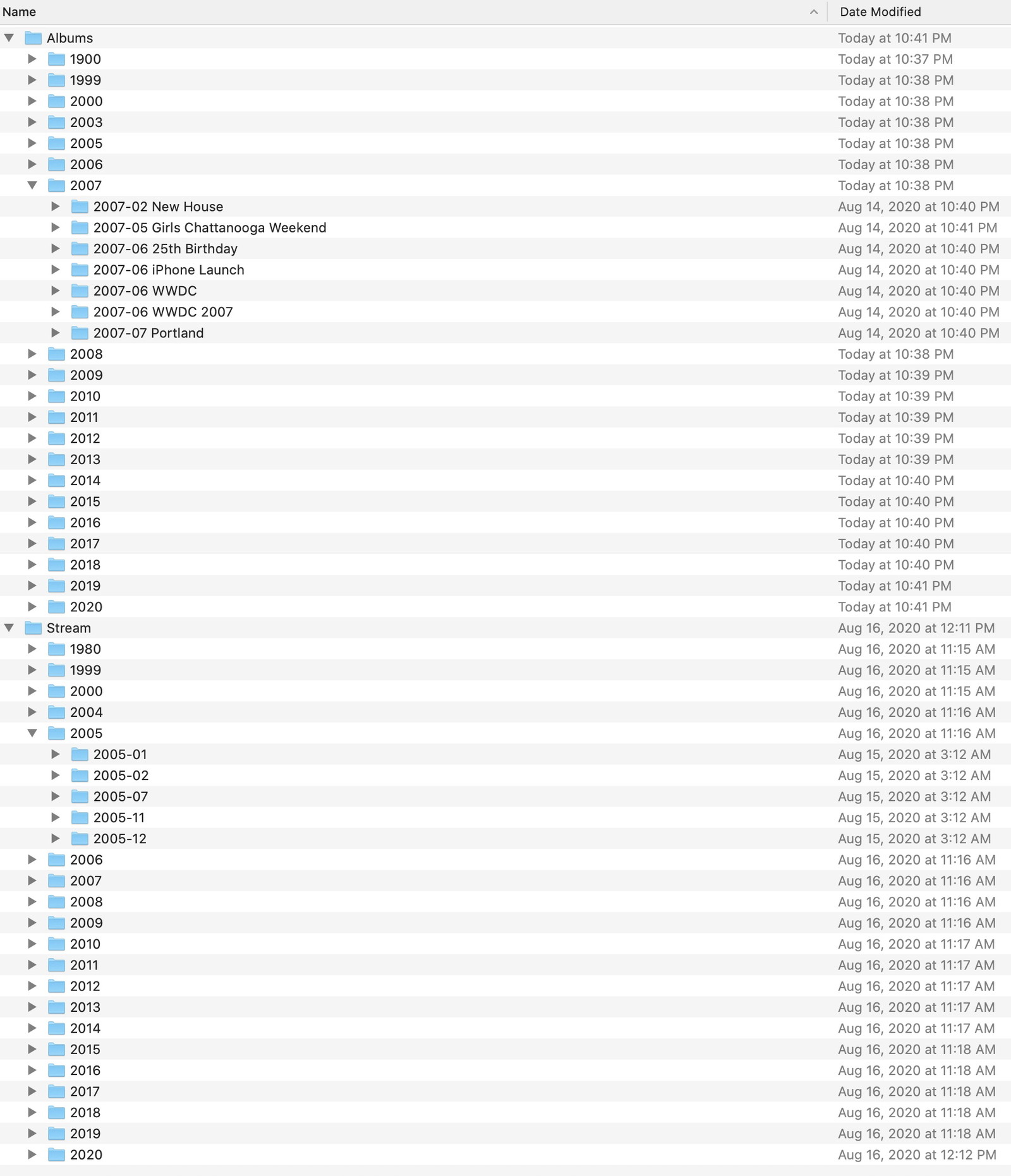

And this is totally fine. It’s definitely the most flexible and complete solution and leaves it to the end-user to figure out what to do with all this data. And what I want to do is convert everything into a structure that looks like…

…along with all of my items deduplicated. So, any items that are included in an album are not duplicated in a year-month folder. Those date-based folders only contain items that are not sorted into albums.
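That placement rule can be sketched in a few lines of Python. This is an illustration, not the tool's real logic: the `keep_in` function is hypothetical, the `YYYY-MM` folder name is assumed, and keeping one copy in every album an item belongs to is my guess at the behavior for items in multiple albums.

```python
import re
from typing import Iterable, List

# Per-day Takeout folders look like "2020-08-15" or "2020-08-15 #2".
DAY_FOLDER = re.compile(r"(\d{4}-\d{2})-\d{2}(?: #\d+)?")


def keep_in(folders: Iterable[str]) -> List[str]:
    """Given every folder holding a copy of one item, return where to keep it."""
    names = list(folders)
    albums = sorted({n for n in names if DAY_FOLDER.fullmatch(n) is None})
    if albums:
        # Album membership wins: drop the date-folder copies entirely.
        return albums
    # No album: keep a single copy in the month bucket (assumed "YYYY-MM").
    return sorted({DAY_FOLDER.fullmatch(n).group(1) for n in names})
```

For example, an item found in `2020-08-15`, `Beach Trip`, and `Birthdays` stays only in the two albums, while an item found only in `2020-08-15` and `2020-08-15 #2` ends up once in `2020-08`.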

I spent a few hours messing around with various bash scripts and some StackOverflow posts but realized I needed something better than any shell script I could write. (I’m sure someone could write it, though.)

I came up with a tiny Swift command-line tool that scans all of your files and stores a hash of each in a sqlite database. (Thanks, Gus!) Then it figures out where each file belongs, moves it there, and ignores any duplicates it finds.
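The hash-and-database idea looks roughly like this in Python. It is a sketch of the general technique, not a port of the Swift tool: the SHA-256 choice, the `files` table layout, and the function names are all assumptions.

```python
import hashlib
import sqlite3
from pathlib import Path
from typing import List


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large videos never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def index_files(root: Path, db: sqlite3.Connection) -> List[Path]:
    """Record each file's hash; return files whose content was already seen."""
    db.execute(
        "CREATE TABLE IF NOT EXISTS files (hash TEXT PRIMARY KEY, path TEXT NOT NULL)"
    )
    duplicates = []
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        file_hash = file_digest(path)
        seen = db.execute(
            "SELECT path FROM files WHERE hash = ?", (file_hash,)
        ).fetchone()
        if seen is None:
            db.execute(
                "INSERT INTO files (hash, path) VALUES (?, ?)",
                (file_hash, str(path)),
            )
        else:
            duplicates.append(path)  # Same bytes as an earlier file.
    db.commit()
    return duplicates
```

Keeping the hashes in sqlite rather than in memory means the index survives between runs, which matters when a scan takes hours.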

As you might imagine, it’s not the fastest process, but the results were worth it for me. My iMac Pro was able to scan and process my 1.4TB library (100k+ files) on an external USB3 spinning drive overnight. (Sorry, I should have timed it. I think it was between 4 – 8 hours.)

I’m quite pleased with the results. Not only did it clean up all of the per-day folders into a more sane by-month directory structure, but removing duplicates cut the total file size by 30%.

Running the tool is a two-step process.

First, you need to import your Google Photos backups into your library – a shared folder where all of your photos and videos are kept. The reason for this step is that Google’s backup structure will often contain duplicate folder names as well as duplicate filenames. And since merging folders on macOS is a delicate process, the import step will do that for you.
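For a sense of what a safe merge involves, here is a small Python sketch. It is not what `photoz import` actually does internally; the `merge_tree` name and the " (1)" renaming scheme for filename collisions are assumptions made for the example.

```python
import shutil
from pathlib import Path


def merge_tree(source: Path, library: Path) -> None:
    """Move every file under source into library, never overwriting anything."""
    # Build the full list first so moving files doesn't disturb the walk.
    for item in sorted(source.rglob("*")):
        if not item.is_file():
            continue
        target = library / item.relative_to(source)
        target.parent.mkdir(parents=True, exist_ok=True)
        counter = 1
        while target.exists():
            # Same folder and filename already imported: pick a new name
            # (assumed scheme) and let a later dedupe pass sort it out.
            target = target.with_name(f"{item.stem} ({counter}){item.suffix}")
            counter += 1
        shutil.move(str(item), str(target))
```

Folders with the same name are merged file by file instead of one replacing the other, and a clashing filename is kept under a new name rather than silently lost.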

Just run this

`photoz import /path/to/google/photos/backup /path/to/library`

on each of the .tgz files that Takeout gives you.

After everything is merged in, run

`photoz organize /path/to/library`

and wait.

If all goes well, you’ll end up with a sane by-month/album folder structure that uses considerably less space.

The code is open source. Fixes and improvements are welcome. I haven’t cleaned it up much since first writing it, but it’s only three Swift files, so it should be easy to dive into and customize to your liking.

And while it works for me and I did a crap ton of dry-runs and testing building this tool, please, please, please make backups of your data before running an internet stranger’s code over something as important as your photo library.